10 – self / cross, hard / soft attention and the transformer

Published 3 years ago • 35K plays • Length 1:12:01Download video MP4

Download video MP3

Similar videos

-

1:12:01

1:12:01

nlp | attentions and transformers by alfredo canziani

-

9:57

9:57

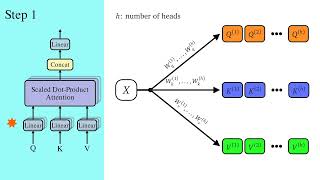

a dive into multihead attention, self-attention and cross-attention

-

15:25

15:25

visual guide to transformer neural networks - (episode 2) multi-head & self-attention

-

7:27

7:27

cross-attention (nlp817 11.9)

-

1:18:02

1:18:02

week 12 – practicum: attention and the transformer

-

12:01

12:01

transformer core saturation and imbalance

-

10:15

10:15

how autotransformers(variacs) work

-

48:06

48:06

transformers are rnns: fast autoregressive transformers with linear attention (paper explained)

-

1:14:09

1:14:09

dl 12.4.5:7 nlp: neural translation, attention models and transformers

-

22:30

22:30

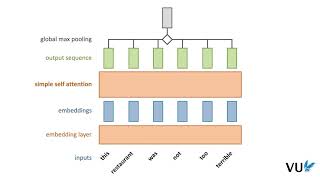

lecture 12.1 self-attention

-

21:31

21:31

efficient self-attention for transformers

-

6:25

6:25

crossvit: cross-attention multi-scale vision transformer for image classification (paper review)

-

8:37

8:37

transformers - part 7 - decoder (2): masked self-attention

-

7:34

7:34

self-attention in transfomers - part 2

-

14:32

14:32

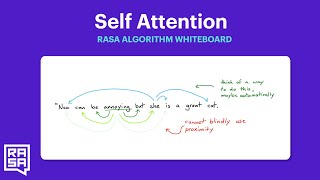

rasa algorithm whiteboard - transformers & attention 1: self attention

-

50:24

50:24

linformer: self-attention with linear complexity (paper explained)

-

39:24

39:24

intuition behind self-attention mechanism in transformer networks

-

20:12

20:12

how do transformers work? (attention is all you need)