8-bit quantisation demistyfied with transformers : a solution for reducing llm sizes

Published 1 year ago • 398 plays • Length 37:20Download video MP4

Download video MP3

Similar videos

-

13:59

13:59

bitnet: scaling 1-bit transformers for llms

-

5:13

5:13

what is llm quantization?

-

5:50

5:50

what are transformers (machine learning model)?

-

13:59

13:59

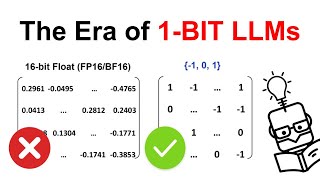

the era of 1-bit llms: all large language models are in 1.58 bits - paper explained

-

6:10

6:10

the era of 1-bit llms by microsoft | ai paper explained

-

31:51

31:51

mamba from scratch: neural nets better and faster than transformers

-

26:15

26:15

【博士vlog】2024最新模型mamba详解,transformer已死,你想知道的都在这里了!

-

6:44

6:44

how do llms work? next word prediction with the transformer architecture explained

-

14:06

14:06

mamba might just make llms 1000x cheaper...

-

5:34

5:34

how large language models work

-

0:26

0:26

llm qlora 8bit update bitsandbytes

-

26:21

26:21

how to quantize an llm with gguf or awq

-

9:11

9:11

transformers, explained: understand the model behind gpt, bert, and t5

-

0:51

0:51

bert networks in 60 seconds