a self-knowledge distillation-driven cnn-lstm model for predicting disease... - daryl fung - la 2022

Published 1 year ago • 43 plays • Length 6:53

Similar videos

-

9:51

knowledge distillation in deep learning - basics

-

7:21

knowledge distillation in deep learning - distilbert explained

-

4:06

distilling neural networks | two minute papers #218

-

4:00

4:00

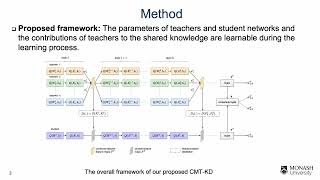

collaborative multi-teacher knowledge distillation for learning low bit-width deep neural networks

-

1:07:22

lecture 10 - knowledge distillation | mit 6.s965

-

1:07:26

lecture 10 - knowledge distillation | mit 6.s965

-

12:35

knowledge distillation: a good teacher is patient and consistent

-

5:29

5:29

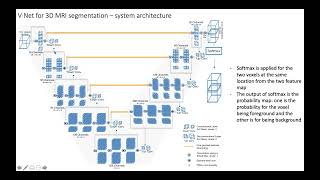

v-net for 3d mri segmentation

-

3:32

building a curious ai with random network distillation

-

1:01

driven by data

-

1:01

few sample knowledge distillation for efficient network compression

-

8:45

what is knowledge distillation? explained with example

-

2:44

live on 28th aug: knowledge distillation in deep learning

-

12:30

subclass distillation

-

17:59

a new direction in quantum processing

-

13:35

wsdm-23 paper: learning to distill graph neural networks

-

1:34:56

distillation made simple | distillation demystified: exploring the science and applications

-

2:10

chapter 5.8 - innovation for the fatigued

-

19:06

unsupervised network discovery for brain imaging data