deploy transformer models in the browser with #onnxruntime

Published 2 years ago • 14K plays • Length 11:02

Similar videos

-

6:05

6:05

converting models to #onnx format

-

50:44

50:44

making neural networks run in browser with onnx - ron dagdag - ndc melbourne 2022

-

16:32

16:32

accelerate transformer inference on cpu with optimum and onnx

-

7:52

7:52

an overview of the pytorch-onnx converter

-

27:14

27:14

how large language models work, a visual intro to transformers | chapter 5, deep learning

-

6:57

6:57

pytorch model in c using onnxruntime | c advantage

-

4:46

4:46

onnx runtime

-

2:03

2:03

what is onnx runtime (ort)?

-

44:35

44:35

onnx and onnx runtime

-

11:27

11:27

onnx runtime

-

3:31

3:31

onnx runtime | tutorial-8 | open neural network exchange | onnx

-

23:41

23:41

testing a custom transformer model for language translation with onnx

-

10:18

10:18

lightning talk: streamlining model export with the new onnx exporter - maanav dalal & aaron bockover

-

11:10

11:10

converting pytorch model to onnx format and load it in the browser

-

14:25

14:25

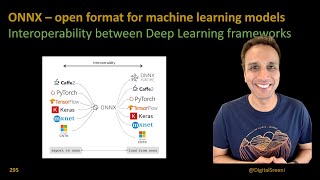

295 - onnx – open format for machine learning models

-

9:11

9:11

transformers, explained: understand the model behind gpt, bert, and t5

-

0:59

0:59

onnx runtime release 1.13 - transformer optimization overview #youtubeshorts

-

21:59

21:59

on-device training with onnx runtime

-

1:00

1:00

why transformer over recurrent neural networks

-

![[cppday20] interoperable ai: onnx & onnxruntime in c (m. arena, m.verasani)](https://i.ytimg.com/vi/exsgNLf-MyY/mqdefault.jpg) 59:08

59:08

[cppday20] interoperable ai: onnx & onnxruntime in c (m. arena, m.verasani)