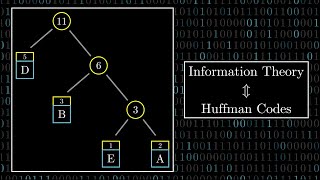

entropy is the limit of compression (huffman coding)

Published 10 years ago • 35K plays • Length 4:15

Similar videos

-

4:36

4:36

huffman coding || easy method

-

4:43

4:43

huffman coding step-by-step example

-

10:03

10:03

huffman coding (lossless compression algorithm)

-

17:44

17:44

3.4 huffman coding - greedy method

-

12:12

12:12

entropy in compression - computerphile

-

56:58

56:58

lecture 4: entropy and data compression (iii): shannon's source coding theorem, symbol codes

-

55:10

55:10

9. huffman coding

-

18:23

18:23

these compression algorithms could halve our image file sizes (but we don't use them) #somepi

-

5:31

5:31

the shannon limit - bell labs - future impossible

-

51:51

51:51

entropy & mutual information in machine learning

-

14:06

14:06

a modified gaussian-based fuzzy c-means (fcm) clustering algorithm with total variation ...

-

29:11

29:11

huffman codes: an information theory perspective

-

49:53

49:53

2. compression: huffman and lzw

-

13:54

13:54

what is entropy? and its relation to compression

-

6:30

6:30

how computers compress text: huffman coding and huffman trees

-

40:28

40:28

the science and application of data compression algorithms

-

7:05

7:05

claude shannon's information entropy (physical analogy)

-

6:41

6:41

huffman coding

-

51:57

51:57

digital image processing i - lecture 40 - entropy and source coding

-

4:26

4:26

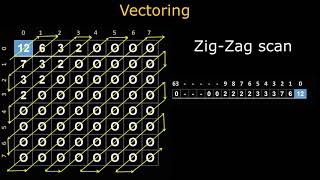

entropy encoding in jpeg - dr. j. martin leo

-

6:35

6:35

data compression using huffman coding algorithm

-

9:34

9:34

aqa computer science 3 3 8 huffman coding