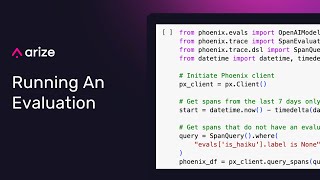

evaluating llm changes with phoenix

Published 2 months ago • 467 plays • Length 12:57

Similar videos

- 59:05 • arize ai phoenix: open-source tracing & evaluation for ai (llm/rag/agent)
- 5:07 • phoenix oss: ai observability & evaluation in a notebook
- 10:49 • llm evaluation: getting started
- 15:42 • llm observability with arize phoenix and llamaindex
- 42:41 • introducing arize-phoenix and openinference
- 31:49 • advanced llm evaluation: classes of llm evals – a deep dive
- 6:36 • what is retrieval-augmented generation (rag)?
- 9:54 • llm evals and llm as a judge: fundamentals
- 14:59 • llamaindex workflows: everything you need to get started and trace and evaluate your agent
- 47:05 • building and evaluating agentic rag with vectara and arize
- 38:16 • llm evaluation: creating an llm eval from scratch featuring bazaarvoice
- 46:46 • llm evaluation essentials: statistical analysis of summarization llm evaluations
- 5:47 • phoenix: guardrails ai integration walkthrough
- 28:37 • arize phoenix open source library - ml observability in a notebook
- 53:47 • llm evaluation essentials: benchmarking and analyzing retrieval approaches
- 5:12 • llm app ab testing using projects
- 44:14 • llm evaluation in practice: timeseries evals
- 55:48 • llm evaluation with arize ai's aparna dhinakaran // mlops podcast #210
- 29:04 • evaluating and tracing llm apps
- 44:22 • rag time! evaluate rag with llm evals and benchmarking
- 33:50 • evaluating llm-based applications