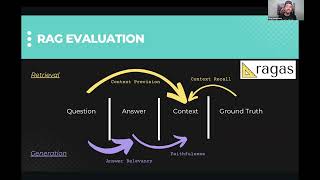

How to evaluate an LLM-powered RAG application automatically.

Published 8 months ago • 23K plays • Length 50:42

Similar videos

- Evaluating LLM-based applications (33:50)
- LLM evaluation with MLflow and DagsHub for generative AI application (18:49)
- LLM evaluation basics: datasets & metrics (5:18)
- Evaluate LLMs - RAG (8:45)
- Benchmarking LLMs explained: how to evaluate LLMs for your business (1:49)
- LangSmith tutorial - LLM evaluation for beginners (36:10)
- Master LLMs: top strategies to evaluate LLM performance (8:42)
- Session 7: RAG evaluation with Ragas and how to improve retrieval (37:21)
- Evaluating RAG applications #ai #llm (0:34)
- What is retrieval-augmented generation (RAG)? (6:36)
- What is an LLM agent? #generativeai #llm #gpt4 (0:29)
- Creating datasets to evaluate your own LLM? (0:59)
- Learn to evaluate LLMs and RAG approaches (19:14)
- Evaluating LLMs using LangChain (11:25)
- LLM evaluation (2/2): harnessing LLMs for evaluating text generation (18:31)