How to mount an Azure Storage folder to Databricks (DBFS)?

Published 1 year ago • 1.7K plays • Length 7:07
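The page itself contains only the video, so here is a minimal sketch of one common way to do this. It is not the method shown in the video. It assumes account-key authentication against Azure Blob Storage through the `wasbs://` scheme. `dbutils.fs.mount`, `dbutils.fs.mounts` and the `fs.azure.account.key.<account>.blob.core.windows.net` config key are real Databricks/Hadoop names. The helper names `wasbs_mount_args` and `mount_if_absent`, and all account, container, folder and mount names, are hypothetical placeholders. Because `dbutils` exists only inside Databricks notebooks, the sketch takes it as a parameter.

```python
# Hypothetical helpers (not a Databricks API): build arguments for
# dbutils.fs.mount and mount a Blob Storage folder only if not yet mounted.
# Assumes account-key auth over wasbs://; names passed in are placeholders.
from typing import Dict, Optional


def wasbs_mount_args(
    account: str,
    container: str,
    account_key: str,
    mount_name: str,
    folder: Optional[str] = None,
) -> Dict[str, object]:
    """Return keyword arguments for dbutils.fs.mount (Azure Blob Storage)."""
    source = f"wasbs://{container}@{account}.blob.core.windows.net"
    if folder:
        # To mount a sub-folder instead of the whole container,
        # append the folder path to the container URI.
        source += "/" + folder.strip("/")
    return {
        "source": source,
        "mount_point": f"/mnt/{mount_name}",
        # Hadoop configuration key that carries the storage account access key.
        "extra_configs": {
            f"fs.azure.account.key.{account}.blob.core.windows.net": account_key
        },
    }


def mount_if_absent(dbutils, args: Dict[str, object]) -> bool:
    """Mount unless the mount point is already in use; return True if mounted."""
    existing = {m.mountPoint for m in dbutils.fs.mounts()}
    if args["mount_point"] in existing:
        return False
    dbutils.fs.mount(**args)
    return True
```

In a notebook you might call it as `mount_if_absent(dbutils, wasbs_mount_args("mystorageacct", "raw", dbutils.secrets.get(scope="my-scope", key="storage-key"), "raw_sales", folder="sales"))` and then browse the files with `dbutils.fs.ls("/mnt/raw_sales")`. The scope, key and storage names are again placeholders. Reading the key from a secret scope keeps it out of notebook source. The existence check avoids the error that `dbutils.fs.mount` raises when the mount point is already taken.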


Similar videos

-

13:51

13:51

19. mount azure blob storage to dbfs in azure databricks

-

13:00

13:00

18. create mount point using dbutils.fs.mount() in azure databricks

-

12:04

12:04

9. databricks file system(dbfs) overview in azure databricks

-

4:28

4:28

how to connect azure databricks to an azure storage account

-

9:49

9:49

how to - mount azure data lake storage - azure databricks

-

1:14:54

1:14:54

data engineering with databricks #dataengineering #databricks

-

2:47:24

2:47:24

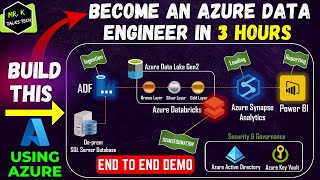

an end to end azure data engineering real time project demo | get hired as an azure data engineer

-

11:15

11:15

databricks - day 6: databricks file system (dbfs) | 30 days of databricks

-

14:49

14:49

9. how to create mount point in azure databricks | dbutils.fs.mount in databricks | databricks

-

7:00

7:00

azure databricks: create storage credentials and external locations to adls gen2

-

10:48

10:48

azure storage account: azure data lake storage (adls) vs blob storage

-

10:02

10:02

connecting azure databricks to azure blob storage

-

24:25

24:25

azure data lake storage (gen 2) tutorial | best storage solution for big data analytics in azure

-

14:43

14:43

17. databricks & pyspark: azure data lake storage integration with databricks

-

10:26

10:26

mount to azure data lake storage gen2 (adls) | azure databricks | pyspark | azure data engineer

-

15:35

15:35

30.access data lake storage gen2 or blob storage with an azure service principal in azure databricks

-

8:35

8:35

folders on blob storage aren't what they seem

-

16:46

16:46

azure storage: blob vs data lake

-

1:15:15

1:15:15

azure storage options for analytics - dustin vannoy

-

1:32

1:32

how to upload a folder in databricks

-

13:31

13:31

7. databricks file system in azure databricks | dbfs in databricks | what is databricks file system

-

11:28

11:28

how to download data from databricks (dbfs) to local system | databricks for spark | apache spark