How to run LLMs locally on any computer with LM Studio (Llama, Mistral & more)

Published 4 months ago • 5.2K plays • Length 6:53

Similar videos

- How to run any open-source LLM locally in Linux • 11:12

- Run your own LLM locally: Llama, Mistral & more • 6:55

- Run any open-source model locally (LM Studio tutorial) • 12:16

- Run any open-source LLM locally (no-code LM Studio tutorial) • 14:11

- Llama 3 one-click local deployment! No GPU needed! 100% guaranteed to work; easily try Meta's latest 8B and 70B AI models!! | 零度解说 • 11:06

- Deploy open LLMs with llama-cpp server • 14:01

- QLoRA: how to fine-tune an LLM on a single GPU (w/ Python code) • 36:58

- Run LLMs locally with LM Studio • 0:28

- Run your own ChatGPT-like LLM on your Windows PC! • 14:50

- How to connect local LLMs to CrewAI [Ollama, Llama 2, Mistral] • 25:07

- Running Ollama on Windows // run LLMs locally on Windows w/ Ollama • 7:21

- LM Studio: the easiest and best way to run local LLMs • 10:42

- Get started with Mistral 7B locally in 6 minutes • 6:43

- Mistral 7B 🖖 beats Llama 2 13B and can run on your phone?? • 13:11

- Llama 3 tutorial - Llama 3 on Windows 11 - local LLM model - Ollama Windows install • 1:03

- Running Mistral AI on your machine with Ollama • 6:25

- llama-cpp-python: step-by-step guide to run LLMs on a local machine | Llama 2 | Mistral • 12:01

- Running LLMs on a Mac with llama.cpp • 3:47

-

8:47

8:47

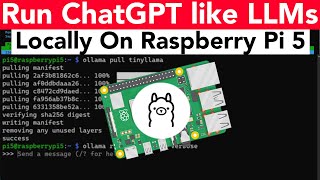

how to run chatgpt like llms on raspberry pi 5 with ollama (tinyllama, phi & more)

- Ollama: run LLMs locally on your computer (fast and easy) • 6:06

- Run an LLM inside a font file • 0:22