If I don't need to block crawlers, should I create a robots.txt file?

Published 12 years ago • 21K plays • Length 1:58

Similar videos

- if i don't need to block crawlers, should i create a robots txt file (1:58)

-

2:13

2:13

should i block duplicate pages using robots.txt?

-

3:59

3:59

how to block chatgpt crawlers

-

5:03

5:03

how to fix blocked by robots.txt errors in google search console

-

2:47

2:47

can i use robots.txt to optimize googlebot's crawl?

-

![fix : discovered - currently not indexed | crawled - currently not indexed [solved]](https://i.ytimg.com/vi/tGoBfN5RB_g/mqdefault.jpg) 13:25

13:25

fix : discovered - currently not indexed | crawled - currently not indexed [solved]

-

4:46

4:46

fix robots txt blocked issue

-

19:06

19:06

english google seo office-hours from may 2023

-

9:29

9:29

what is robots.txt | explained

-

4:07

4:07

robots.txt seo optimization

-

53:02

53:02

the past, present, and future of robots.txt

-

1:56

1:56

special files in robots.txt

-

8:24

8:24

robots.txt: everything you need to know for seo

-

![robots.txt file is invalid in sitechecker or lighthouse [how to fix?]](https://i.ytimg.com/vi/0RGRjh3LQD4/mqdefault.jpg) 4:07

4:07

robots.txt file is invalid in sitechecker or lighthouse [how to fix?]

-

15:23

15:23

what is robots.txt & what can you do with it

-

7:40

7:40

how to fix the indexed though blocked by robots.txt error

-

8:58

8:58

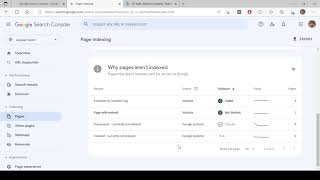

google search console: indexing issues? let's fix them | google search console part 5

-

2:53

2:53

how to block pdfs from google search using robots txt

-

1:29

1:29

google search console: how to submit a robots.txt in the new version?

-

5:36

5:36

robots.txt | lesson 8/34 | semrush academy

-

1:33

1:33

why might googlebot get errors when trying to access my robots.txt file?