kl (kullback-leibler) divergence (part 3/4): minimizing cross entropy is same as minimizing kld

Published 1 year ago • 143 plays • Length 9:46

Similar videos

-

5:13

5:13

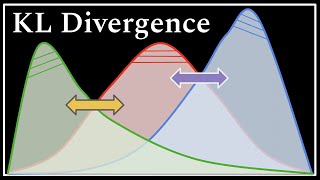

intuitively understanding the kl divergence

-

13:33

kl (kullback-leibler) divergence (part 2/4): cross entropy and kl divergence

-

6:10

entropy | cross entropy | kl divergence | quick explained

-

5:24

5:24

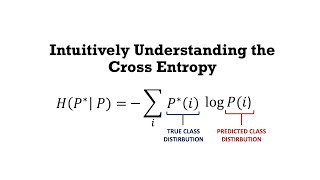

intuitively understanding the cross entropy loss

-

10:41

10:41

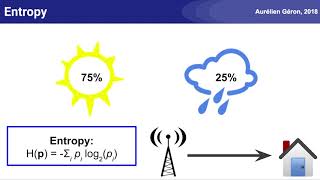

a short introduction to entropy, cross-entropy and kl-divergence

-

15:20

kl (kullback-leibler) divergence (part 4/4): jensen's inequality and why is kld always positive or 0

-

18:14

the kl divergence : data science basics

-

6:32

kl divergence and gibbs' inequality

-

13:10

kl divergence, cross entropy

-

11:15

cross entropy loss error function - ml for beginners!

-

13:57

information theory - entropy, kl divergence, cross entropy and more.

-

9:31

neural networks part 6: cross entropy

-

11:54

madl - kullback-leibler divergence

-

1:31

kullback leibler divergence - georgia tech - machine learning

-

10:08

10:08

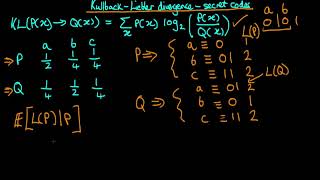

explaining the kullback-liebler divergence through secret codes

-

21:20

21:20

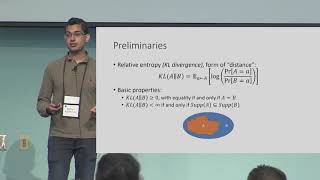

unifying computational entropies via kullback–leibler divergence

-

5:10

kullback leibler divergence between two normal pdfs

-

16:52

deep learning 20: (2) variational autoencoder : explaining kl (kullback-leibler) divergence

-

4:44

kl divergence (relative entropy)

-

11:35

kl divergence - clearly explained!

-

1:40

cross entropy