l11.4 why batchnorm works

Published 3 years ago • 2.1K plays • Length 23:38

Similar videos

-

15:14

15:14

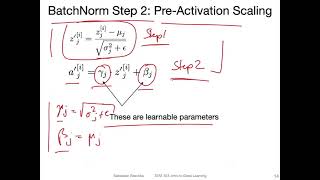

l11.2 how batchnorm works

-

8:49

8:49

batch normalization - explained!

-

8:03

8:03

l11.1 input normalization

-

6:46

6:46

l14.2: spatial dropout and batchnorm

-

13:51

13:51

batch normalization | what it is and how to implement it

-

7:32

7:32

batch normalization (“batch norm”) explained

-

2:53

2:53

l11.0 input normalization and weight initialization -- lecture overview

-

11:40

11:40

regularization in a neural network | dealing with overfitting

-

38:24

38:24

batch normalization - part 1: why bn, internal covariate shift, bn intro

-

59:01

59:01

batch (offline) rl (part 1)

-

8:41

8:41

batch size powers of 2 really necessary?

-

12:38

12:38

11.1 lecture overview (l11 model eval. part 4)

-

25:44

25:44

batch normalization: accelerating deep network training by reducing internal covariate shift

-

0:16

0:16

intro to batch normalization part 4

-

11:38

11:38

136 understanding deep learning parameters batch size

-

18:28

18:28

batch size and batch normalization in neural networks and deep learning with keras and tensorflow

-

8:55

8:55

normalizing activations in a network (c2w3l04)

-

15:48

15:48

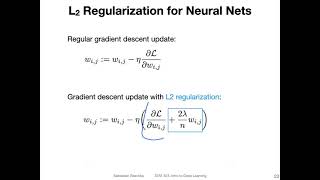

l10.4 l2 regularization for neural nets

-

48:05

48:05

how does batch normalization help optimization?

-

18:09

18:09

nn - 21 - batch normalization - theory

-

13:49

13:49

insights from finetuning llms with low-rank adaptation

-

16:27

16:27

batch normalizing