L8.4 Logits and Cross Entropy

Published 3 years ago • 8.3K plays • Length 6:48


Similar videos

- L8.7.1 One-Hot Encoding and Multi-Category Cross Entropy • 15:35

- L8.7.2 One-Hot Encoding and Multi-Category Cross Entropy -- Code Example • 15:05

- L8.3 Logistic Regression Loss Derivative and Training • 19:57

- Dive into Deep Learning - Lecture 4: Logistic/Softmax Regression and Cross Entropy Loss with PyTorch • 18:43

- Enhancing Vessel Continuity in DL-Based Segmentation Using Maximum Intensity Projection as Loss • 5:11

-

5:24

5:24

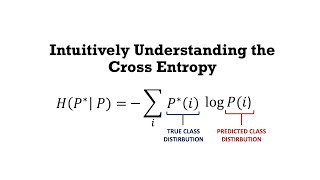

intuitively understanding the cross entropy loss

- Neural Networks Part 6: Cross Entropy • 9:31

- Julien Gorenflot – Transient Absorption for OPV: Why? How? And What? • 34:52

- Cross Entropy (Deep Dive Equation and Intuitive Understanding) • 4:13

- Insights from Finetuning LLMs with Low-Rank Adaptation • 13:49

- L8.6 Multinomial Logistic Regression / Softmax Regression • 17:32

- Sparse Expert Models: Past and Future • 17:28

-

5:21

5:21

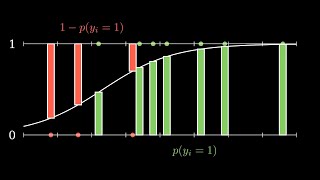

understanding binary cross-entropy / log loss in 5 minutes: a visual explanation

- Cross Entropy • 1:40

- L8.5 Logistic Regression in PyTorch -- Code Example • 19:03

- A Matrix-Free Fix-Propagate-and-Project Heuristic for MILPs | Mathieu Besançon | JuliaCon 2022 • 27:04

- Unit 4.1 | Logistic Regression for Multiple Classes | Part 5 | The Cross Entropy Loss Function • 6:33

- DL4CV@WIS (Spring 2021) Tutorial 1: Linear Regression & Softmax Classifier • 55:11