positional embeddings in transformers explained | demystifying positional encodings.

Published 2 years ago • 64K plays • Length 9:40

Similar videos

-

9:21

9:21

adding vs. concatenating positional embeddings & learned positional encodings

-

11:54

11:54

positional encoding in transformer neural networks explained

-

19:48

19:48

transformers explained | the architecture behind llms

-

13:02

13:02

stanford xcs224u: nlu i contextual word representations, part 3: positional encoding i spring 2023

-

10:18

10:18

self-attention with relative position representations – paper explained

-

9:33

9:33

positional encoding and input embedding in transformers - part 3

-

5:36

5:36

how positional encoding in transformers works?

-

9:09

9:09

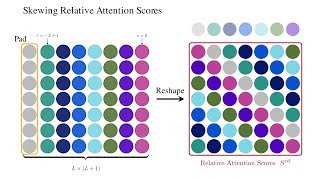

relative self-attention explained

-

16:33

16:33

transformers explained - how transformers work

-

0:57

0:57

what is positional encoding in transformer?

-

10:08

10:08

the transformer neural network architecture explained. “attention is all you need”

-

11:10

11:10

swin transformer paper animated and explained

-

2:13

2:13

postitional encoding

-

36:15

36:15

transformer neural networks, chatgpt's foundation, clearly explained!!!

-

6:21

6:21

transformer positional embeddings with a numerical example.

-

19:29

19:29

positional encodings in transformers (nlp817 11.5)

-

0:49

0:49

what and why position encoding in transformer neural networks

-

5:26

5:26

an image is worth 16x16 words: vit | vision transformer explained

-

1:05

1:05

chatgpt transformer positional embeddings in 60 seconds