relu activation function - rectified linear unit activation function - deep learning - #moein

Published 2 years ago • 383 plays • Length 7:24
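The video's topic, the rectified linear unit, is defined as ReLU(x) = max(0, x). It passes positive inputs through unchanged and maps everything else to zero. The sketch below is a minimal illustration in plain Python and is not taken from the video. The helper names `relu`, `relu_grad` and `relu_vec` are hypothetical. Using 0 as the derivative at x = 0 is an assumed convention, because ReLU is not differentiable at that point.

```python
from typing import List


def relu(x: float) -> float:
    """Rectified linear unit: return x for positive inputs, 0 otherwise."""
    return max(0.0, x)


def relu_grad(x: float) -> float:
    """Derivative of ReLU: 1 for x > 0, 0 for x < 0.

    ReLU has no derivative at x == 0; this sketch assumes the common
    convention of returning 0 there.
    """
    return 1.0 if x > 0 else 0.0


def relu_vec(xs: List[float]) -> List[float]:
    """Apply ReLU element-wise to a list of inputs (hypothetical helper)."""
    return [relu(x) for x in xs]


if __name__ == "__main__":
    inputs = [-2.0, -0.5, 0.0, 1.5, 3.0]
    print(relu_vec(inputs))                 # [0.0, 0.0, 0.0, 1.5, 3.0]
    print([relu_grad(x) for x in inputs])   # [0.0, 0.0, 0.0, 1.0, 1.0]
```

The gradient is exactly 1 for every positive input. For that reason, ReLU is usually described as avoiding the saturating gradients of sigmoid and tanh for positive activations.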

Similar videos

-

2:46

2:46

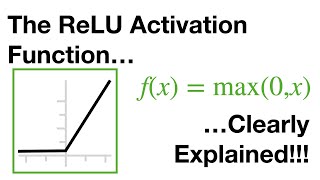

relu activation function - deep learning dictionary

-

8:58

8:58

neural networks pt. 3: relu in action!!!

-

8:43

8:43

leaky relu activation function - leaky rectified linear unit function - deep learning - #moein

-

8:29

8:29

relu leaky relu parametric relu activation functions solved example machine learning mahesh huddar

-

6:43

6:43

activation functions in neural networks explained | deep learning tutorial

-

12:23

12:23

tutorial 10- activation functions rectified linear unit(relu) and leaky relu part 2

-

5:46

5:46

why rectified linear unit (relu) is required in cnn? | relu layer in cnn

-

9:01

9:01

why relu is better than other activation functions | tanh saturating gradients

-

8:59

8:59

which activation function should i use?

-

8:58

8:58

5. neural network parameters (weights, bias, activation function, learning rate)

-

2:16

2:16

types of activation functions - deep learning - #moein

-

12:05

12:05

what is activation function in neural network ? types of activation function in neural network

-

10:05

10:05

activation functions - explained!

-

21:52

21:52

rectified linear units (relu) activation function | deep learning in kannada #21

-

3:19

3:19

5.10 rectified linear unit(relu) activation function in tamil

-

11:11

11:11

deep learning | rectified linear unit

-

44:52

44:52

activation functions in deep learning | sigmoid, tanh and relu activation function

-

5:36

5:36

why non-linear activation functions (c1w3l07)

-

4:49

4:49

44: relu activation | tensorflow | tutorial

-

5:49

5:49

activation function - feedforward neural networks (deep learning)