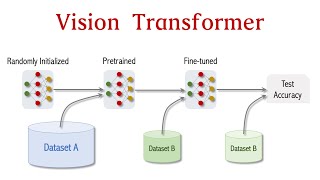

Vision Transformers (ViT) Explained: Fine-Tuning in Python

Published 1 year ago • 56K plays • Length 30:27

Similar videos

-

6:36

6:36

visualizing the self-attention head of the last layer in dino vit: a unique perspective on vision ai

-

5:34

5:34

attention mechanism: overview

-

13:44

13:44

vision transformers explained

-

15:25

15:25

visual guide to transformer neural networks - (episode 2) multi-head & self-attention

-

14:47

14:47

vision transformer for image classification

-

4:44

4:44

self-attention in deep learning (transformers) - part 1

-

39:13

39:13

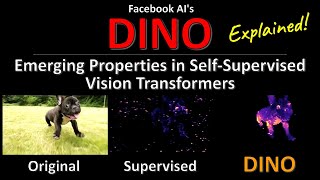

dino: emerging properties in self-supervised vision transformers (facebook ai research explained)

-

36:45

36:45

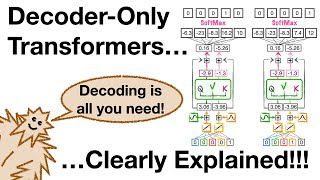

decoder-only transformers, chatgpts specific transformer, clearly explained!!!

-

30:56

30:56

dino horror for the first time every (tempus triad demo)

-

10:38

10:38

what is masked multi headed attention ? explained for beginners

-

31:54

31:54

dino: emerging properties in self-supervised vision transformers | paper explained!

-

15:01

15:01

illustrated guide to transformers neural network: a step by step explanation

-

1:14:19

1:14:19

efficientml.ai lecture 14 - vision transformer (mit 6.5940, fall 2023)

-

0:46

0:46

coding multihead attention for transformer neural networks

-

34:58

34:58

fastervit: fast vision transformers with hierarchical attention

-

30:49

30:49

vision transformer basics

-

20:39

20:39

dinov2 explained: visual model insights & comprehensive code guide

-

8:14

8:14

dino: emerging properties in self-supervised vision transformers (paper illustrated)

-

5:54

5:54

visualize the transformers multi-head attention in action

-

58:04

58:04

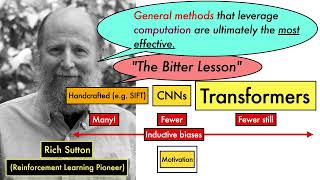

attention is all you need (transformer) - model explanation (including math), inference and training

-

13:49

13:49

how dino learns to see the world - paper explained