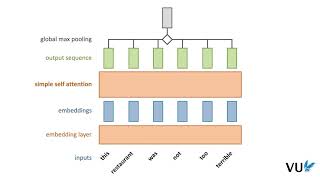

self-attention in deep learning (transformers) - part 1

Published 3 years ago • 49K plays • Length 4:44

Similar videos

- 26:10 • attention in transformers, visually explained | chapter 6, deep learning
- 15:02 • self attention in transformer neural networks (with code!)
- 7:34 • self-attention in transformers - part 2
- 5:34 • attention mechanism: overview
- 15:01 • illustrated guide to transformers neural network: a step by step explanation
- 18:08 • transformer neural networks derived from scratch
- 1:11:41 • stanford cs25: v2 i introduction to transformers w/ andrej karpathy
- 1:12:01 • 10 – self / cross, hard / soft attention and the transformer
- 14:32 • rasa algorithm whiteboard - transformers & attention 1: self attention
- 27:14 • but what is a gpt? visual intro to transformers | chapter 5, deep learning
- 0:58 • coding self attention in transformer neural networks
- 36:15 • transformer neural networks, chatgpt's foundation, clearly explained!!!
- 22:30 • lecture 12.1 self-attention
- 0:51 • bert networks in 60 seconds
- 15:25 • visual guide to transformer neural networks - (episode 2) multi-head & self-attention
- 1:23:24 • self attention in transformers | deep learning | simple explanation with code!
- 21:31 • efficient self-attention for transformers
- 0:58 • 5 concepts in transformer neural networks (part 1)
- 58:04 • attention is all you need (transformer) - model explanation (including math), inference and training
- 4:30 • attention mechanism in a nutshell
- 0:45 • cross attention vs self attention
- 0:34 • let's code the transformer encoder