self-attention using scaled dot-product approach

Published 1 year ago • 15K plays • Length 16:09Download video MP4

Download video MP3

Similar videos

-

28:47

28:47

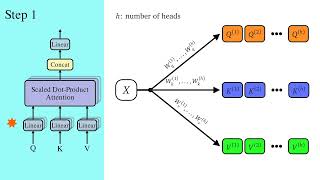

1a - scaled dot product attention explained (transformers) #transformers #neuralnetworks

-

16:09

16:09

l19.4.2 self-attention and scaled dot-product attention

-

5:34

5:34

attention mechanism: overview

-

35:08

35:08

self-attention mechanism explained | self-attention explained | scaled dot product attention

-

9:57

9:57

a dive into multihead attention, self-attention and cross-attention

-

21:31

21:31

efficient self-attention for transformers

-

4:44

4:44

self-attention in deep learning (transformers) - part 1

-

26:10

26:10

attention in transformers, visually explained | chapter 6, deep learning

-

15:14

15:14

scaled dot product attention explained implemented

-

0:41

0:41

what are self attention vectors?

-

15:51

15:51

attention for neural networks, clearly explained!!!

-

11:13

11:13

transformer attention is all you need | scaled dot product attention models | self attention part 1

-

4:30

4:30

attention mechanism in a nutshell

-

7:01

7:01

pytorch for beginners #29 | transformer model: multiheaded attention - scaled dot-product

-

0:57

0:57

self attention vs multi-head self attention

-

0:58

0:58

5 concepts in transformer neural networks (part 1)

-

36:15

36:15

transformer neural networks, chatgpt's foundation, clearly explained!!!

-

15:06

15:06

how to explain q, k and v of self attention in transformers (bert)?