[WeightWatcher] Self-regularization in deep neural networks: evidence from random matrix theory

Streamed 4 years ago • 763 plays • Length 1:02:48

Similar videos

-

9:43

9:43

regularization (c2w1l04)

-

16:53

16:53

efficient ibe with tight reduction to standard assumption in the multi challenge setting;

-

39:49

39:49

adjoint-free variational data assimilation and model order reduce techniques

-

37:22

37:22

12 august 2019, deep learning opening workshop: horseshoe regularization in complex and deep mod...

-

4:53

4:53

innovating in the age of digital transformation

-

2:15:57

2:15:57

efficient large-scale ai workshop | session 2: training and inference efficiency

-

15:04

15:04

data preprocessing: column standardization-dimensionality reduction lecture 7@ applied ai course

-

5:44

5:44

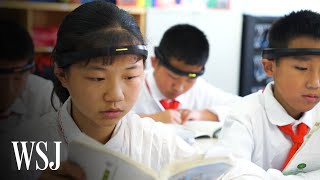

how china is using artificial intelligence in classrooms | wsj

-

3:34

3:34

aistats 2021: calibrated adaptive probabilistic ode solvers

-

16:43

16:43

j. shi | an optimal estimator of intrinsic alignments for star-forming galaxies

-

2:46

2:46

normalized inputs and initial weights

-

1:31

1:31

will automation for ir measurements work for you?

-

4:10

4:10

weight initialization for deep feedforward neural networks

-

1:48:19

1:48:19

standardization ensuring trustworthy digital society enabled by ai tech pt. 2 | ai for good webinars

-

3:47

3:47

idimension 100 - configuring a flat base or smooth scale

-

10:23

10:23

the efficiency misnomer | size does not matter | what does the number of parameters mean in a model?

-

3:56

3:56

attribution-aware weight transfer: a warm-start initialization for class-incremental semantic segme

-

45:13

45:13

lecture 25 - ai model efficiencytoolkit (aimet) | mit 6.s965

-

30:07

30:07

reproducible results with weights&biases