word2vec papers explained from scratch: skip-gram with negative sampling

Published 3 years ago • 10K plays • Length 13:09

Similar videos

-

16:12

16:12

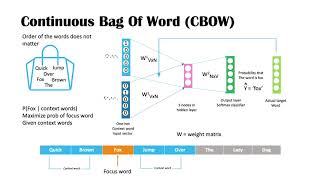

word embedding and word2vec, clearly explained!!!

-

7:21

7:21

word2vec - skipgram and cbow

-

19:27

19:27

what is word2vec? how does it work? cbow and skip-gram

-

11:54

11:54

negative sampling

-

3:25

3:25

10# how skipgram works | word2vec | nlp

-

18:28

18:28

what is word2vec? a simple explanation | deep learning tutorial 41 (tensorflow, keras & python)

-

1:18:17

1:18:17

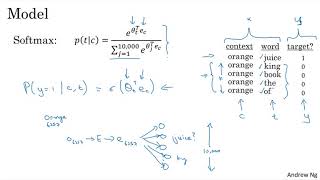

lecture 2 | word vector representations: word2vec

-

28:51

28:51

semantics with word2vec | the skip-gram model, some probability, and softmax activation

-

1:10:26

1:10:26

language model overview: from word2vec to bert

-

16:56

16:56

vectoring words (word embeddings) - computerphile

-

14:09

14:09

word2vec simplified|word2vec explained in simple language|cbow and skipgrm methods in word2vec

-

1:00

1:00

how word2vec works (cbow & skip-gram, in 60 seconds)

-

17:17

17:17

a complete overview of word embeddings

-

7:59

7:59

coding word2vec : natural language processing

-

5:02

5:02

#216 - mastering word2vec: understanding cbow, skip-gram, and negative sampling

-

14:10

14:10

skip-gram model to derive word vectors

-

34:16

34:16

nlp -word2vec model - python demo using skip gram model

-

8:44

8:44

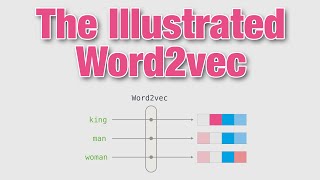

the illustrated word2vec - a gentle intro to word embeddings in machine learning

-

20:10

20:10

machine learning 53: skip-gram

-

11:54

11:54

negative sampling - sequence models