Aligning LLMs with Direct Preference Optimization

Streamed 6 months ago • 24K plays • Length 58:07

Similar videos

-

8:55

8:55

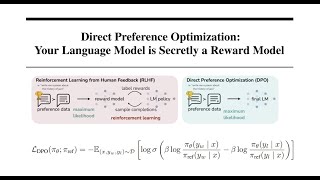

direct preference optimization: your language model is secretly a reward model | dpo paper explained

- direct preference optimization: forget rlhf (ppo) (9:10)

- direct preference optimization (dpo) - how to fine-tune llms directly without reinforcement learning (21:15)

- direct preference optimization (dpo): your language model is secretly a reward model explained (36:25)

- what do you know about llm | what is large language model | what is llm ai (5:55)

- should you use open source large language models? (6:40)

- everything is open source if you can reverse engineer (try it right now!) (13:56)

- direct preference optimization in one minute (1:00)

- direct preference optimization (dpo): how it works and how it topped an llm eval leaderboard (11:35)

- llm alignment methods - dpo vs ipo vs kto vs pcl (5:08)

- direct preference optimization (dpo): a low cost alternative to train llm models (8:00)

- llm training process with direct preference optimization (dpo) and bypass reward model (part3) (7:51)

- direct preference optimization (dpo) explained: bradley-terry model, log probabilities, math (48:46)

- [qa] self-play preference optimization for language model alignment (10:24)

- direct preference optimization or dpo is out and tr-dpo is in ? | new llm paper (5:27)

- #mastering llm alignment and preference optimization llama3 llm (39:46)

- 4 ways to align llms: rlhf, dpo, kto, and orpo (6:18)

- penjelasan metode dpo (direct preference optimization) dalam proses alignment llm [explanation of the dpo (direct preference optimization) method in the llm alignment process] (14:36)

- direct preference optimization (dpo) (1:01:56)

- direct preference optimization (dpo) of llms to reduce toxicity (8:00)

- orpo: new dpo alignment and sft method for llm (24:05)

- dpo x llm fine-tuning #machinelearning #llm #chatgpt (0:53)