direct preference optimization (dpo) - how to fine-tune llms directly without reinforcement learning

Published 1 month ago • 3.4K plays • Length 21:15

Similar videos

-

8:55

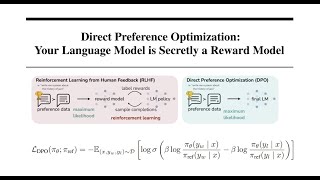

direct preference optimization: your language model is secretly a reward model | dpo paper explained

-

58:07

aligning llms with direct preference optimization

-

9:10

direct preference optimization: forget rlhf (ppo)

-

36:25

direct preference optimization (dpo): your language model is secretly a reward model explained

-

1:01:56

direct preference optimization (dpo)

-

8:00

direct preference optimization (dpo) of llms to reduce toxicity

-

15:21

prompt engineering, rag, and fine-tuning: benefits and when to use

-

6:36

what is retrieval-augmented generation (rag)?

-

6:08

generative ai 101: when to use rag vs fine tuning?

-

0:53

when do you use fine-tuning vs. retrieval augmented generation (rag)? (guest: harpreet sahota)

-

32:26

demystifying llm training and optimisation for analytics

-

5:08

llm alignment methods - dpo vs ipo vs kto vs pcl