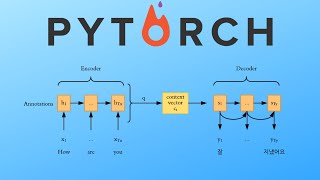

explore sequence-to-sequence with attention for text summarization

Published 5 years ago • 4K plays • Length 10:39

Similar videos

- sequence-to-sequence (seq2seq) encoder-decoder neural networks, clearly explained!!! (16:50)
- seq2seq with attention (machine translation with deep learning) (11:54)
- sequence to sequence learning with neural networks | encoder and decoder in-depth intuition (13:22)
- attention for rnn seq2seq models (1.25x speed recommended) (24:51)
- sequence-to-sequence learning in text summarization (56:46)
- how to make a text summarizer - intro to deep learning #10 (9:06)
- lecture 17 | sequence to sequence: attention models (1:20:48)
- building an ai search engine using gpt 3.5 and milvus vector db 🤖 (13:04)
- the math behind attention: keys, queries, and values matrices (36:16)
- 8 insar - unwrapping - exporting and unwrapping (14:55)
- seq2seq (4:36)
- (old) lecture 17 | sequence-to-sequence models with attention (1:14:34)
- abstractive text summarization using sequence-to-sequence rnns and beyond | tdls (1:00:38)
- pytorch seq2seq tutorial for machine translation (50:55)
- [nus cs6101 deep learning for nlp] s recess - machine translation, seq2seq and attention (1:36:37)
- how to do text summarization (7:37)
- s18 sequence to sequence models: attention models (1:09:20)
- pytorch seq2seq with attention for machine translation (25:19)
- redesigning neural architectures for sequence to sequence learning (59:18)