the math behind attention: keys, queries, and values matrices

Published 10 months ago • 214K plays • Length 36:16Download video MP4

Download video MP3

Similar videos

-

21:02

21:02

the attention mechanism in large language models

-

5:34

5:34

attention mechanism: overview

-

58:04

58:04

attention is all you need (transformer) - model explanation (including math), inference and training

-

0:42

0:42

serrano.academy - the art of understanding

-

44:26

44:26

what are transformer models and how do they work?

-

1:12:01

1:12:01

10 – self / cross, hard / soft attention and the transformer

-

9:57

9:57

a dive into multihead attention, self-attention and cross-attention

-

18:08

18:08

transformer neural networks derived from scratch

-

15:51

15:51

attention for neural networks, clearly explained!!!

-

4:30

4:30

attention mechanism in a nutshell

-

15:25

15:25

visual guide to transformer neural networks - (episode 2) multi-head & self-attention

-

15:02

15:02

self attention in transformer neural networks (with code!)

-

9:11

9:11

transformers, explained: understand the model behind gpt, bert, and t5

-

16:09

16:09

self-attention using scaled dot-product approach

-

15:59

15:59

multi head attention in transformer neural networks with code!

-

1:11:53

1:11:53

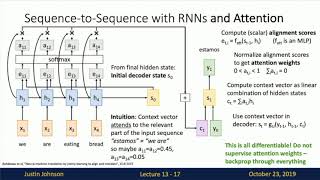

lecture 13: attention

-

9:34

9:34

what is attention in language models?

-

13:37

13:37

what are transformer models and how do they work?

-

4:44

4:44

self-attention in deep learning (transformers) - part 1