log loss or cross-entropy cost function in logistic regression

Published 5 years ago • 47K plays • Length 8:42

Similar videos

-

5:21

5:21

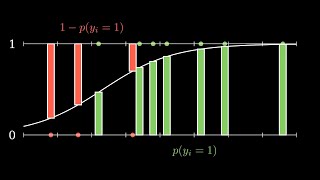

understanding binary cross-entropy / log loss in 5 minutes: a visual explanation

- binary cross entropy explained | what is binary cross entropy | log loss function explained (10:22)

-

5:24

5:24

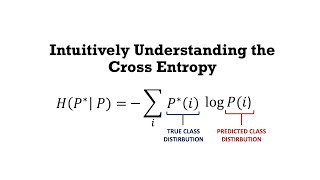

intuitively understanding the cross entropy loss

- logistic regression 4 cross entropy loss (8:00)

- 7.2.3. loss function and cost function for logistic regression (29:13)

- logistic regression cost function | machine learning | simply explained (6:22)

- logistic regression cost function (c1w2l03) (8:12)

- neural networks part 6: cross entropy (9:31)

- loss functions - explained! (8:30)

-

8:13

8:13

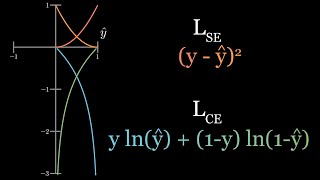

why do we need cross entropy loss? (visualized)

- what is the meaning of cross entropy/ log loss as cost function for classification? [lecture 2.6] (10:19)

- introduction to logistic regression | mathematics behind | log-of-odds | cross-entropy loss | mle (19:24)

- basic intuition of logistic regression (2:18)

- logistic regression [simply explained] (14:22)

- logistic regression - binary entropy cost function and gradient (37:12)

- l8.4 logits and cross entropy (6:48)

-

18:29

18:29

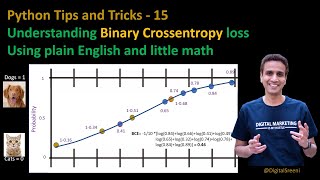

tips tricks 15 - understanding binary cross-entropy loss

- logistic regression loss function (0:38)

- categorical/binary cross-entropy loss, softmax loss, logistic loss and all those confusing names (11:40)