making llms smarter: rag efficient vector search explained

Published 1 month ago • 50 plays • Length 0:53Download video MP4

Download video MP3

Similar videos

-

0:53

0:53

making llms smarter: rag efficient vector search explained

-

1:16:08

1:16:08

genai, rag, vector databases: bridging last year's evolution with 2024's vision

-

1:11:47

1:11:47

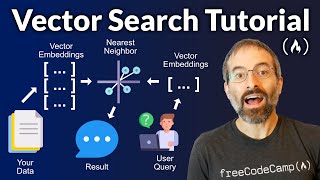

vector search rag tutorial – combine your data with llms with advanced search

-

2:33:11

2:33:11

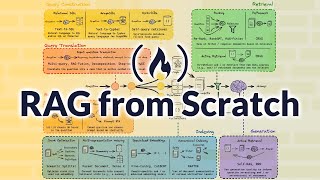

learn rag from scratch – python ai tutorial from a langchain engineer

-

10:24

10:24

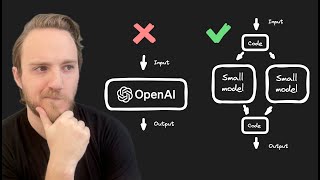

training your own ai model is not as hard as you (probably) think

-

21:14

21:14

building a rag based llm app and deploying it in 20 minutes

-

9:41

9:41

what is retrieval augmented generation (rag) - augmenting llms with a memory

-

18:35

18:35

building production-ready rag applications: jerry liu

-

34:22

34:22

how to build multimodal retrieval-augmented generation (rag) with gemini

-

2:53

2:53

build a large language model ai chatbot using retrieval augmented generation

-

0:36

0:36

advanced chucking strategy for rag #llms #ai

-

33:44

33:44

index 2024 keynote: the future of search and ai applications

-

5:40:59

5:40:59

local retrieval augmented generation (rag) from scratch (step by step tutorial)

-

10:00

10:00

open source rag running llms locally with ollama

-

0:58

0:58

prompt engineering: rag or not? how to instruct llms for optimal answers

-

53:55

53:55

optimizing rag with llms: exploring chunking techniques and reranking for enhanced results

-

0:39

0:39

🤯the tech behind ai girlfriends: rag (retrieval augmented generation)

-

5:45

5:45

retrieval augmented generation with openai/gpt and chroma