Optimizing LLM retrieval strategies with Arize AI & Qdrant

Published 3 months ago • 432 plays • Length 58:43

Similar videos

-

5:26

5:26

prompt playground

-

53:55

53:55

optimizing rag with llms: exploring chunking techniques and reranking for enhanced results

-

1:00:14

1:00:14

llm search & retrieval systems with arize and llamaindex: powering llms on your proprietary data

-

6:36

6:36

what is retrieval-augmented generation (rag)?

-

54:58

54:58

prompt templates, functions and prompt window management

-

1:11:47

1:11:47

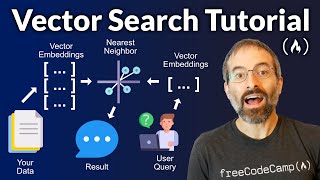

vector search rag tutorial – combine your data with llms with advanced search

-

12:57

12:57

evaluating llm changes with phoenix

-

53:47

53:47

llm evaluation essentials: benchmarking and analyzing retrieval approaches

-

17:34

17:34

gorilla llm: teach llms to use tools at scale

-

25:43

25:43

how promptlayer adapts llm evaluation strategies

-

28:37

28:37

building llm evals from scratch

-

1:16:47

1:16:47

fine tuning and evaluating llms with anyscale and arize

-

5:47

5:47

arize onboarding: llm tracing

-

0:22

0:22

performance tracing with arize ai - find and fix model problems faster

-

38:31

38:31

how to efficiently fine-tune and serve oss llms

-

55:01

55:01

building and troubleshooting an advanced llm query engine

-

5:51

5:51

ep 30. llm rag optimization patterns