rope rotary position embedding to 100k context length

Published 2 months ago • 2.6K plays • Length 39:56

Similar videos

-

14:06

rope (rotary positional embeddings) explained: the positional workhorse of modern llms

-

11:17

rotary positional embeddings: combining absolute and relative

-

35:53

how to code long-context llm: longlora explained on llama 2 100k

-

29:17

extending context window of large language models via positional interpolation explained

-

39:52

roformer: enhanced transformer with rotary position embedding explained

-

30:18

rotary positional embeddings

-

20:51

openplc on a ul-listed industrial raspberry pi

-

18:35

14 transformer positional encoding (why self-attention needs positional encoding)

-

35:01

rotary positional embeddings with code: easy explanation, no mathematics

-

1:21

transformer architecture: fast attention, rotary positional embeddings, and multi-query attention

-

1:10:55

llama explained: kv-cache, rotary positional embedding, rms norm, grouped query attention, swiglu

-

9:40

positional embeddings in transformers explained | demystifying positional encodings.

-

58:30

58:30

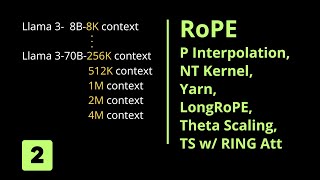

longrope & theta scaling to 1 mio token (2/2)

-

13:02

stanford xcs224u: nlu i contextual word representations, part 3: positional encoding i spring 2023

-

28:00

extending context window of large language models via position interpolation

-

19:49

why do llm’s have context limits? how can we increase the context? alibi and landmark attention!

-

0:55

position encoding details in transformer neural networks

-

9:21

adding vs. concatenating positional embeddings & learned positional encodings

-

0:49

what and why position encoding in transformer neural networks

-

0:59

top_p in llm settings explained — prompt engineering course #generativemodels #languagemodels