Direct Preference Optimization (DPO) in AI

Published 8 months ago • 426 plays • Length 5:12

Similar videos

-

8:55

8:55

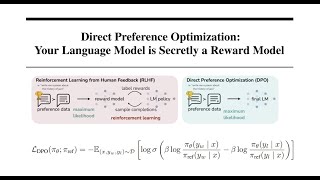

direct preference optimization: your language model is secretly a reward model | dpo paper explained

-

58:07

58:07

aligning llms with direct preference optimization

-

48:46

48:46

direct preference optimization (dpo) explained: bradley-terry model, log probabilities, math

-

9:10

9:10

direct preference optimization: forget rlhf (ppo)

-

19:38

19:38

reinforcement learning from human feedback (rlhf) & direct preference optimization (dpo) explained

-

1:01:56

1:01:56

direct preference optimization (dpo)

-

21:15

21:15

direct preference optimization (dpo) - how to fine-tune llms directly without reinforcement learning

-

36:25

36:25

direct preference optimization (dpo): your language model is secretly a reward model explained

-

36:45

36:45

decoder-only transformers, chatgpts specific transformer, clearly explained!!!

-

13:26

13:26

proximal policy optimization | chatgpt uses this

-

1:01:33

1:01:33

mit 6.s192 - lecture 22: diffusion probabilistic models, jascha sohl-dickstein

-

11:35

11:35

direct preference optimization (dpo): how it works and how it topped an llm eval leaderboard

-

1:00

1:00

direct preference optimization in one minute

-

5:27

5:27

direct preference optimization or dpo is out and tr-dpo is in ? | new llm paper

-

8:00

8:00

direct preference optimization (dpo) of llms to reduce toxicity

-

7:51

7:51

llm training process with direct preference optimization (dpo) and bypass reward model (part3)

-

53:03

53:03

dpo - part1 - direct preference optimization paper explanation | dpo an alternative to rlhf??

-

42:49

42:49

direct preference optimization (dpo)

-

8:00

8:00

direct preference optimization (dpo): a low cost alternative to train llm models

-

0:54

0:54

direct preference optimization (dpo)

-

41:21

41:21

dpo - part2 - direct preference optimization implementation using trl | dpo an alternative to rlhf??

-

47:55

47:55

dpo : direct preference optimization