keyword transformer: a self-attention model for keyword spotting

Published 2 years ago • 1.4K plays • Length 6:06

Similar videos

-

26:14

26:14

keyword transformer: a self-attention model for keyword spotting

-

9:37

9:37

vision transformer attention

-

4:44

4:44

self-attention in deep learning (transformers) - part 1

-

5:34

5:34

attention mechanism: overview

-

49:32

49:32

transformer

-

7:34

7:34

self-attention in transfomers - part 2

-

16:09

16:09

self-attention using scaled dot-product approach

-

15:51

15:51

attention for neural networks, clearly explained!!!

-

0:44

0:44

what is self attention in transformer neural networks?

-

8:37

8:37

transformers - part 7 - decoder (2): masked self-attention

-

15:02

15:02

self attention in transformer neural networks (with code!)

-

15:01

15:01

illustrated guide to transformers neural network: a step by step explanation

-

32:59

32:59

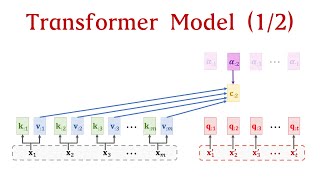

transformer model (1/2): attention layers

-

0:45

0:45

why masked self attention in the decoder but not the encoder in transformer neural network?

-

0:43

0:43

transformers | what is attention?

-

20:12

20:12

how do transformers work? (attention is all you need)

-

10:13

10:13

key query value attention explained

-

0:45

0:45

cross attention vs self attention

-

15:22

15:22

fastformer: additive attention can be all you need | paper explained