L19.0 RNNs & transformers for sequence-to-sequence modeling -- lecture overview

Published 3 years ago • 6.1K plays • Length 3:05

Similar videos

- L19.1 Sequence generation with word and character RNNs (17:44)

-

22:36

22:36

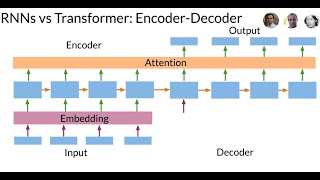

l19.5.1 the transformer architecture

-

22:19

22:19

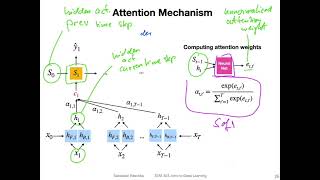

l19.3 rnns with an attention mechanism

- Transformers vs recurrent neural networks (RNN)! (6:28)

- L15.0: Introduction to recurrent neural networks -- lecture overview (3:59)

- L15.2 Sequence modeling with RNNs (13:40)

- L19.4.1 Using attention without the RNN -- a basic form of self-attention (16:11)

- What is a Transformer? The neural network that changed everything! (18:41)

- Attention for neural networks, clearly explained!!! (15:51)

- How AIs, like ChatGPT, learn (8:55)

- Illustrated guide to transformers neural network: a step by step explanation (15:01)

- Attention mechanism: overview (5:34)

- Attention for RNN seq2seq models (1.25x speed recommended) (24:51)

- Sequence-to-sequence (seq2seq) encoder-decoder neural networks, clearly explained!!! (16:50)

- Transformer neural networks, ChatGPT's foundation, clearly explained!!! (36:15)

- NLP lecture 6 - introduction to sequence-to-sequence modeling (14:33)

- Transformers: the best idea in AI | Andrej Karpathy and Lex Fridman (8:38)

- BERT networks in 60 seconds (0:51)

- Sequence models complete course (5:55:34)

- BERT vs GPT (1:00)