L19.3 RNNs with an attention mechanism

Published 3 years ago • 18K plays • Length 22:19


Similar videos

- L19.4.1 Using attention without the RNN -- a basic form of self-attention (16:11)

- Attention mechanism: overview (5:34)

- L19.4.2 Self-attention and scaled dot-product attention (16:09)

- L19.0 RNNs & transformers for sequence-to-sequence modeling -- lecture overview (3:05)

- L19.5.1 The transformer architecture (22:36)

- MIT 6.S191: Recurrent neural networks, transformers, and attention (1:01:31)

- Attention in transformers, visually explained | Chapter 6, Deep learning (26:10)

- Transformers are RNNs: Fast autoregressive transformers with linear attention (paper explained) (48:06)

- L19.4.3 Multi-head attention (7:37)

- L19.1 Sequence generation with word and character RNNs (17:44)

-

6:28

6:28

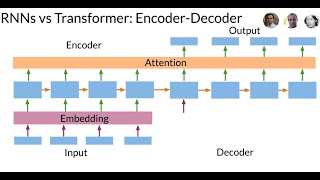

transformers vs recurrent neural networks (rnn)!

- Attention for RNN seq2seq models (1.25x speed recommended) (24:51)

- Attention mechanism in a nutshell (4:30)

- What are transformers (machine learning model)? (5:50)

- MIT 6.S191 (2023): Recurrent neural networks, transformers, and attention (1:02:50)

- Illustrated guide to transformers neural network: A step by step explanation (15:01)

- BERT vs GPT (1:00)

- Transformers, explained: Understand the model behind GPT, BERT, and T5 (9:11)

- Self attention in transformer neural networks (with code!) (15:02)

- L19.5.2.7: Closing words -- the recent growth of language transformers (6:10)

- Attention is all you need (Transformer) - model explanation (including math), inference and training (58:04)

- Transformers | Basics of transformers (0:18)